
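DAY 52: Neural Network Hyperparameter Tuning Guide

Review of key points:

- Random seeds

- Initialization of internal parameters (weights)

- Neural network tuning guide

- Categories of parameters

- Order of tuning

- Tips for tuning each group of parameters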
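
For the random-seed item in the list above, a minimal sketch of a seeding helper. The name `set_seed` and the default value 42 are assumptions for illustration, not part of the original notes; NumPy and PyTorch are seeded only if they are installed.

```python
import random


def set_seed(seed: int = 42) -> None:
    """Seed the random number generators a training script typically uses."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass  # NumPy not installed, nothing to seed
    try:
        import torch
        torch.manual_seed(seed)
        if torch.cuda.is_available():
            torch.cuda.manual_seed_all(seed)
        # Trade some speed for repeatable cuDNN behaviour
        torch.backends.cudnn.deterministic = True
        torch.backends.cudnn.benchmark = False
    except ImportError:
        pass  # PyTorch not installed, nothing to seed


set_seed(42)
first = random.random()
set_seed(42)
second = random.random()
print(first == second)  # True: the same seed reproduces the same draw
```

Calling the helper once at the top of the script, before the model is created, also fixes the initial weights, which makes runs with different hyperparameters easier to compare.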

Assignment: for the simple CNN from day 41, see whether the tuning guide can push the accuracy any higher.

Tuning the day 41 CNN:

Early stopping class:
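
The patience rule behind early stopping can be traced on a plain list of scores. The sketch below is a simplified stand-in, not the `EarlyStopping` class itself: `epochs_until_stop` is a hypothetical helper, and it assumes a higher-is-better score such as the test accuracy that the training loop passes in.

```python
def epochs_until_stop(scores, patience=5, delta=0.0):
    """Return the 1-based epoch at which early stopping fires, or None.

    `scores` holds one higher-is-better value (e.g. accuracy) per epoch.
    """
    best = None
    counter = 0
    for epoch, score in enumerate(scores, start=1):
        if best is None or score >= best + delta:
            best = score      # improvement: remember it and reset the counter
            counter = 0
        else:
            counter += 1      # one more epoch without improvement
            if counter >= patience:
                return epoch
    return None


accuracies = [70.0, 75.0, 78.0, 77.5, 78.2, 78.1, 78.0, 77.9]
print(epochs_until_stop(accuracies, patience=3))  # 8
```

The counter resets at epoch 5 (78.2 beats 78.0), so the three non-improving epochs that trigger the stop are epochs 6 to 8.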

# Early stopping class
class EarlyStopping:
    """Stop training early when the monitored score has not improved for `patience` epochs.

    The score is treated as higher-is-better; the training loop in this post
    passes in the test accuracy. To monitor a loss instead, pass its negative.
    """

    def __init__(self, patience=7, verbose=False, delta=0):
        """
        Args:
            patience (int): epochs to wait after the last improvement. Default: 7
            verbose (bool): if True, print a message on every improvement. Default: False
            delta (float): minimum increase that counts as an improvement. Default: 0
        """
        self.patience = patience
        self.verbose = verbose
        self.counter = 0         # consecutive epochs without improvement
        self.best_score = None   # best score seen so far
        self.early_stop = False  # set to True once patience is exhausted
        self.delta = delta       # improvement threshold

    def __call__(self, score, model):
        if self.best_score is None or score >= self.best_score + self.delta:
            # First call or improvement: record it and reset the counter
            self.save_checkpoint(score, model)
            self.best_score = score
            self.counter = 0
        else:
            self.counter += 1
            print(f'Early-stopping counter: {self.counter} / {self.patience}')
            if self.counter >= self.patience:
                self.early_stop = True

    def save_checkpoint(self, score, model):
        """Called whenever the monitored score improves."""
        if self.verbose:
            previous = 'n/a' if self.best_score is None else f'{self.best_score:.6f}'
            print(f'Monitored score improved ({previous} --> {score:.6f})')
        # torch.save(model.state_dict(), 'checkpoint.pth')

Function for finding the largest batch size that fits
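
The halving search itself does not need a GPU to illustrate. A minimal sketch, assuming a hypothetical `fits` predicate that stands in for "one forward pass ran without an out-of-memory error" and a made-up memory model (30 MB per sample, 4000 MB budget); neither comes from the original post.

```python
def largest_fitting_batch_size(fits, start=512):
    """Halve `start` until `fits(batch_size)` is True; return 0 if nothing fits."""
    batch_size = start
    while batch_size >= 1:
        if fits(batch_size):
            return batch_size
        batch_size //= 2
    return 0


def fits_in_memory(batch_size):
    """Hypothetical memory model: 30 MB per sample, 4000 MB available."""
    return batch_size * 30 <= 4000


print(largest_fitting_batch_size(fits_in_memory))  # 128
```

The loop has two exits: a batch size that fits, or a batch size that has shrunk below 1. Both matter, because a search that only halves on failure and never checks for success or for zero would not terminate cleanly.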

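def find_max_batch_size(train_dataset, device, model):
    """Halve the batch size, starting from 512, until one forward pass fits in memory.

    Only a forward pass without gradients is tested, so training (which also
    stores activations for the backward pass) may need a smaller value.
    """
    max_batch_size = 512
    while max_batch_size >= 1:
        try:
            train_loader = DataLoader(train_dataset, batch_size=max_batch_size, shuffle=True)
            data, _ = next(iter(train_loader))  # a single batch is enough for the check
            with torch.no_grad():
                model(data.to(device))
            print(f"Largest usable batch size: {max_batch_size}")
            return max_batch_size
        except RuntimeError as e:
            if "out of memory" not in str(e).lower():
                raise  # not a memory problem, so do not hide it
            print(f"Batch size {max_batch_size} ran out of memory, halving")
            if torch.cuda.is_available():
                torch.cuda.empty_cache()
            max_batch_size //= 2
    raise RuntimeError("Even a batch size of 1 does not fit in memory")

Function for configuring the optimizer and the learning-rate scheduler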
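
As a rough picture of what a reduce-on-plateau schedule with `patience=3` and `factor=0.5` does to the learning rate, here is a simplified pure-Python re-implementation. It is a sketch only: `plateau_schedule` is a hypothetical helper, the loss values are made up, and the `threshold`, `cooldown` and `min_lr` options of PyTorch's `ReduceLROnPlateau` are ignored.

```python
def plateau_schedule(losses, lr=0.001, patience=3, factor=0.5):
    """Return the learning rate in effect after each epoch's loss is reported."""
    best = float("inf")
    bad_epochs = 0
    history = []
    for loss in losses:
        if loss < best:
            best = loss        # new best loss: reset the bad-epoch counter
            bad_epochs = 0
        else:
            bad_epochs += 1
        if bad_epochs > patience:
            lr *= factor       # plateau detected: shrink the learning rate
            bad_epochs = 0
        history.append(lr)
    return history


losses = [1.0, 0.9, 0.95, 0.93, 0.92, 0.91, 0.8]
print(plateau_schedule(losses))
# [0.001, 0.001, 0.001, 0.001, 0.001, 0.0005, 0.0005]
```

The reduction happens on the fourth consecutive epoch without a new best loss, because the counter has to exceed `patience` rather than merely reach it.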

# Configure the optimizer and the learning-rate scheduler
def configure_optimizer_scheduler(model):
    criterion = nn.CrossEntropyLoss()
    # weight_decay adds L2 regularization
    optimizer = optim.Adam(model.parameters(), lr=0.001, weight_decay=0.0001)
    # Halve the learning rate once the monitored loss stops improving for more than 3 epochs
    scheduler = ReduceLROnPlateau(optimizer, 'min', patience=3, factor=0.5)
    return criterion, optimizer, scheduler

Full code:

import torch

import torch.nn as nn

import torch.optim as optim

from torchvision import datasets, transforms

from torch.utils.data import DataLoader

import matplotlib.pyplot as plt

import numpy as np

from torch.optim.lr_scheduler import ReduceLROnPlateau  # needed for the learning-rate scheduler

# Fallback font for Chinese text in plots (Linux); harmless if the labels are in English
plt.rcParams["font.family"] = ["WenQuanYi Micro Hei", "sans-serif"]
plt.rcParams['axes.unicode_minus'] = False

# Check whether a GPU is available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(f"Using device: {device}")

# 1. Data preprocessing

# Training set: several augmentations to improve generalization
train_transform = transforms.Compose([
    # Pad by 4 pixels, then crop a random 32x32 region
    transforms.RandomCrop(32, padding=4),
    # Random horizontal flip (probability 0.5)
    transforms.RandomHorizontalFlip(),
    # Random jitter of brightness, contrast, saturation and hue
    transforms.ColorJitter(brightness=0.2, contrast=0.2, saturation=0.2, hue=0.1),
    # Random rotation by up to 15 degrees
    transforms.RandomRotation(15),
    # Convert the PIL image or numpy array to a tensor
    transforms.ToTensor(),
    # Normalize each channel with its mean and standard deviation
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))
])

# Test set: normalization only, no augmentation; normalization is invertible, so no information is lost
test_transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))
])

# 2. Load the CIFAR-10 dataset
train_dataset = datasets.CIFAR10(
    root='./cifar_data/cifar_data',
    train=True,
    download=True,
    transform=train_transform  # augmented preprocessing
)

test_dataset = datasets.CIFAR10(
    root='./cifar_data/cifar_data',
    train=False,
    transform=test_transform  # no augmentation on the test set
)

# 4. Define the CNN model (replaces the earlier MLP)

class CNN(nn.Module):
    def __init__(self):
        super(CNN, self).__init__()  # initialize the parent class

        # ---------------------- Convolution block 1 ----------------------
        # Conv layer 1: 3 input channels (RGB), 32 output feature maps, 3x3 kernel, 1-pixel padding
        self.conv1 = nn.Conv2d(
            in_channels=3,    # input channels (RGB)
            out_channels=32,  # output channels (32 new feature maps)
            kernel_size=3,    # 3x3 kernel
            padding=1         # keeps the output the same size as the input
        )
        # Batch normalization over the 32 output channels; speeds up training
        self.bn1 = nn.BatchNorm2d(num_features=32)
        # ReLU activation adds non-linearity: max(0, x)
        self.relu1 = nn.ReLU()
        # Max pooling, 2x2 window, stride 2: halves the feature map (32x32 -> 16x16)
        self.pool1 = nn.MaxPool2d(kernel_size=2, stride=2)  # stride defaults to kernel_size

        # ---------------------- Convolution block 2 ----------------------
        # Conv layer 2: 32 input channels (output of conv1), 64 output channels
        self.conv2 = nn.Conv2d(
            in_channels=32,   # input channels (output channels of the previous layer)
            out_channels=64,  # output channels (number of feature maps doubles)
            kernel_size=3,    # same kernel size
            padding=1         # size: 16x16 -> 16x16 (after conv) -> 8x8 (after pooling)
        )
        self.bn2 = nn.BatchNorm2d(num_features=64)
        self.relu2 = nn.ReLU()
        self.pool2 = nn.MaxPool2d(kernel_size=2)  # halves the size: 16x16 -> 8x8

        # ---------------------- Convolution block 3 ----------------------
        # Conv layer 3: 64 input channels, 128 output channels
        self.conv3 = nn.Conv2d(
            in_channels=64,    # input channels (output channels of the previous layer)
            out_channels=128,  # output channels (number of feature maps doubles again)
            kernel_size=3,
            padding=1          # size: 8x8 -> 8x8 (after conv) -> 4x4 (after pooling)
        )
        self.bn3 = nn.BatchNorm2d(num_features=128)
        self.relu3 = nn.ReLU()  # also reused after fc1 in forward()
        self.pool3 = nn.MaxPool2d(kernel_size=2)  # halves the size: 8x8 -> 4x4

        # ---------------------- Fully connected layers (classifier) ----------------------
        # Flattened feature size: 128 channels x 4x4 = 128 x 16 = 2048
        self.fc1 = nn.Linear(
            in_features=128 * 4 * 4,  # input size (features from the conv layers)
            out_features=512          # output size (hidden units)
        )
        # Dropout: randomly drops 50% of the units during training to reduce overfitting
        self.dropout = nn.Dropout(p=0.5)
        # Output layer: maps the 512 features to the 10 CIFAR-10 classes
        self.fc2 = nn.Linear(in_features=512, out_features=10)

    def forward(self, x):
        # Input: [batch_size, 3, 32, 32] (batch size, channels, image height and width)

        # ---------- Convolution block 1 ----------
        x = self.conv1(x)  # [batch_size, 32, 32, 32] (padding=1 keeps the size)
        x = self.bn1(x)    # batch normalization, shape unchanged
        x = self.relu1(x)  # activation, shape unchanged
        x = self.pool1(x)  # [batch_size, 32, 16, 16] (2x2 pooling halves 32 to 16)

        # ---------- Convolution block 2 ----------
        x = self.conv2(x)  # [batch_size, 64, 16, 16] (padding=1 keeps the size)
        x = self.bn2(x)
        x = self.relu2(x)
        x = self.pool2(x)  # [batch_size, 64, 8, 8]

        # ---------- Convolution block 3 ----------
        x = self.conv3(x)  # [batch_size, 128, 8, 8] (padding=1 keeps the size)
        x = self.bn3(x)
        x = self.relu3(x)
        x = self.pool3(x)  # [batch_size, 128, 4, 4]

        # ---------- Flatten and fully connected layers ----------
        # Flatten the feature maps into vectors: [batch_size, 128*4*4] = [batch_size, 2048]
        x = x.view(-1, 128 * 4 * 4)  # -1 infers the batch dimension
        x = self.fc1(x)      # 2048 -> 512, shape [batch_size, 512]
        x = self.relu3(x)    # reuses relu3 (ReLU has no parameters, so sharing is safe)
        x = self.dropout(x)  # dropout, shape unchanged
        x = self.fc2(x)      # 512 -> 10, shape [batch_size, 10] (raw logits)
        return x  # logits without softmax, as expected by the cross-entropy loss

# Early stopping class

class EarlyStopping:
    """Stop training early when the monitored score has not improved for `patience` epochs.

    The score is treated as higher-is-better; the training loop below passes in
    the test accuracy. To monitor a loss instead, pass its negative.
    """

    def __init__(self, patience=7, verbose=False, delta=0):
        """
        Args:
            patience (int): epochs to wait after the last improvement. Default: 7
            verbose (bool): if True, print a message on every improvement. Default: False
            delta (float): minimum increase that counts as an improvement. Default: 0
        """
        self.patience = patience
        self.verbose = verbose
        self.counter = 0         # consecutive epochs without improvement
        self.best_score = None   # best score seen so far
        self.early_stop = False  # set to True once patience is exhausted
        self.delta = delta       # improvement threshold

    def __call__(self, score, model):
        if self.best_score is None or score >= self.best_score + self.delta:
            # First call or improvement: record it and reset the counter
            self.save_checkpoint(score, model)
            self.best_score = score
            self.counter = 0
        else:
            self.counter += 1
            print(f'Early-stopping counter: {self.counter} / {self.patience}')
            if self.counter >= self.patience:
                self.early_stop = True

    def save_checkpoint(self, score, model):
        """Called whenever the monitored score improves."""
        if self.verbose:
            previous = 'n/a' if self.best_score is None else f'{self.best_score:.6f}'
            print(f'Monitored score improved ({previous} --> {score:.6f})')
        # torch.save(model.state_dict(), 'checkpoint.pth')

# Find the largest batch size that fits in memory

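def find_max_batch_size(train_dataset, device, model):
    """Halve the batch size, starting from 512, until one forward pass fits in memory.

    Only a forward pass without gradients is tested, so training (which also
    stores activations for the backward pass) may need a smaller value.
    """
    max_batch_size = 512
    while max_batch_size >= 1:
        try:
            train_loader = DataLoader(train_dataset, batch_size=max_batch_size, shuffle=True)
            data, _ = next(iter(train_loader))  # a single batch is enough for the check
            with torch.no_grad():
                model(data.to(device))
            print(f"Largest usable batch size: {max_batch_size}")
            return max_batch_size
        except RuntimeError as e:
            if "out of memory" not in str(e).lower():
                raise  # not a memory problem, so do not hide it
            print(f"Batch size {max_batch_size} ran out of memory, halving")
            if torch.cuda.is_available():
                torch.cuda.empty_cache()
            max_batch_size //= 2
    raise RuntimeError("Even a batch size of 1 does not fit in memory")

# Configure the optimizer and the learning-rate scheduler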

def configure_optimizer_scheduler(model):
    criterion = nn.CrossEntropyLoss()
    # weight_decay adds L2 regularization
    optimizer = optim.Adam(model.parameters(), lr=0.001, weight_decay=0.0001)
    # Halve the learning rate once the monitored loss stops improving for more than 3 epochs
    scheduler = ReduceLROnPlateau(optimizer, 'min', patience=3, factor=0.5)
    return criterion, optimizer, scheduler

# Initialize the model

model = CNN()

model = model.to(device)  # move the model to the GPU if one is available

# 5. Train the model (recording the loss of every iteration)

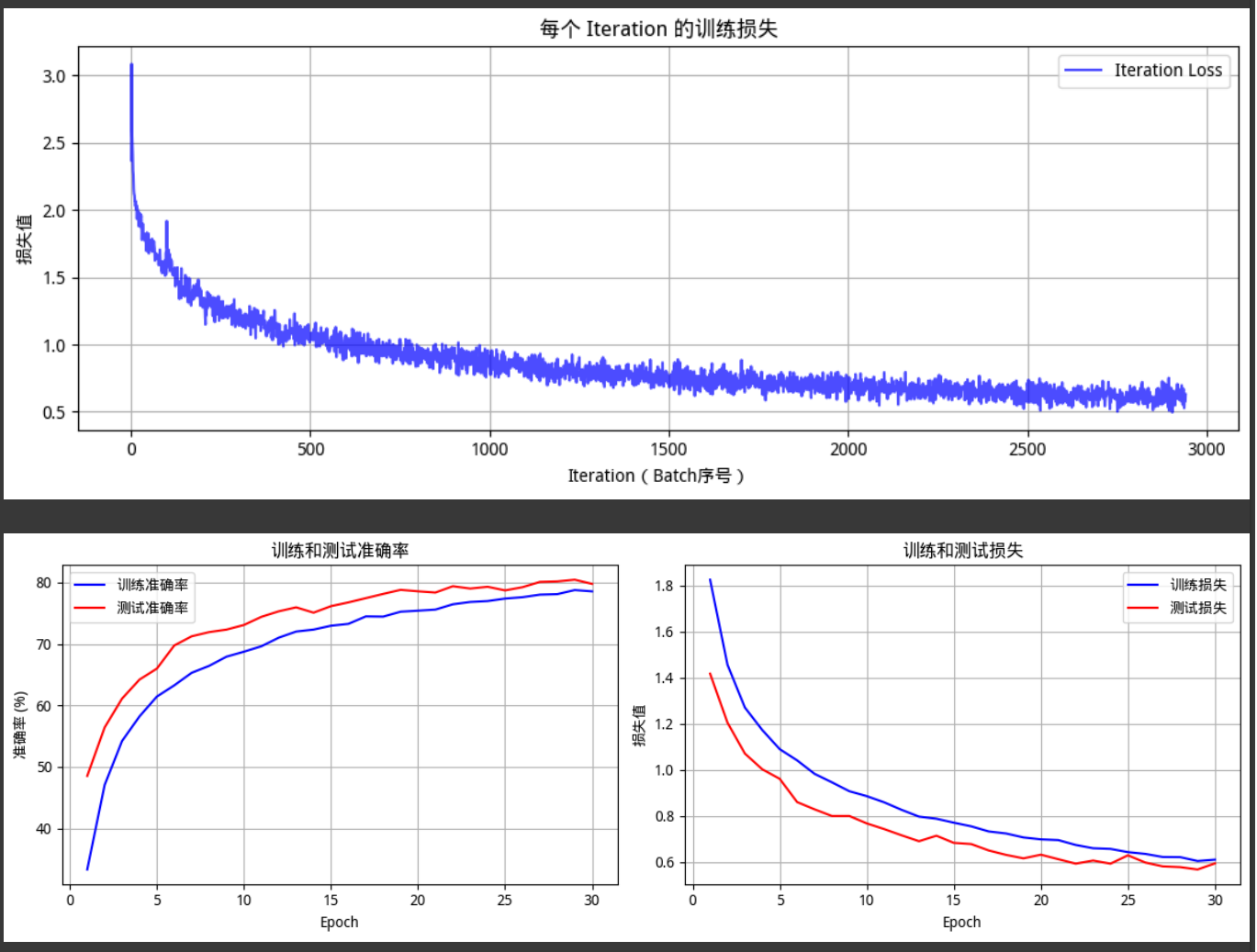

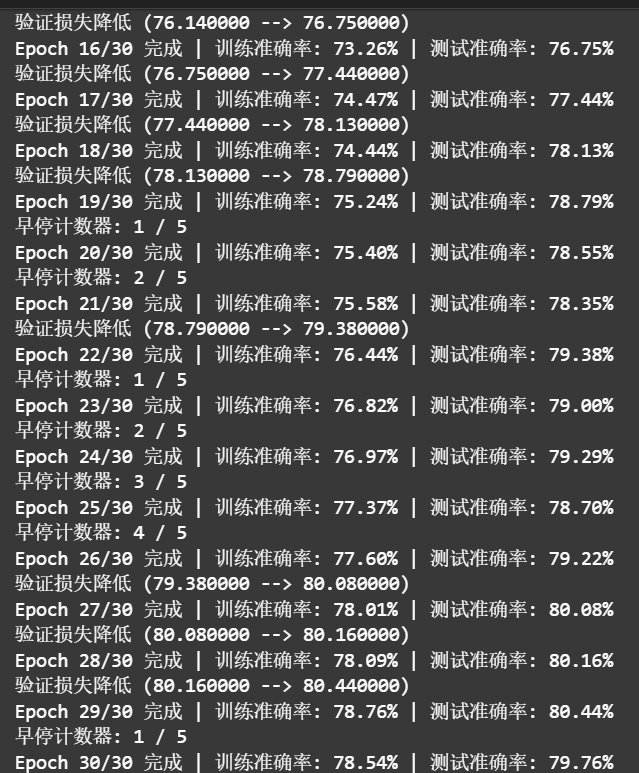

def train(model, train_dataset, test_dataset, device, epochs):
    max_batch_size = find_max_batch_size(train_dataset, device, model)
    criterion, optimizer, scheduler = configure_optimizer_scheduler(model)
    early_stopping = EarlyStopping(patience=5, verbose=True)

    train_loader = DataLoader(train_dataset, batch_size=max_batch_size, shuffle=True)
    test_loader = DataLoader(test_dataset, batch_size=max_batch_size, shuffle=False)

    # Per-iteration records
    all_iter_losses = []  # loss of every batch
    iter_indices = []     # iteration numbers

    # Per-epoch accuracy and loss records
    train_acc_history = []
    test_acc_history = []
    train_loss_history = []
    test_loss_history = []

    for epoch in range(epochs):
        # Switch back to training mode every epoch; the evaluation step below
        # puts the model in eval mode, which would otherwise persist
        model.train()
        running_loss = 0.0
        correct = 0
        total = 0

        for batch_idx, (data, target) in enumerate(train_loader):
            data, target = data.to(device), target.to(device)  # move to the device

            optimizer.zero_grad()             # reset gradients
            output = model(data)              # forward pass
            loss = criterion(output, target)  # compute the loss
            loss.backward()                   # backward pass
            optimizer.step()                  # update the parameters

            # Record the loss of this iteration
            iter_loss = loss.item()
            all_iter_losses.append(iter_loss)
            iter_indices.append(epoch * len(train_loader) + batch_idx + 1)

            # Accumulate loss and accuracy statistics
            running_loss += iter_loss
            _, predicted = output.max(1)
            total += target.size(0)
            correct += predicted.eq(target).sum().item()

            # Print progress every 100 batches
            if (batch_idx + 1) % 100 == 0:
                print(f'Epoch: {epoch+1}/{epochs} | Batch: {batch_idx+1}/{len(train_loader)} '
                      f'| Batch loss: {iter_loss:.4f} | Running mean loss: {running_loss/(batch_idx+1):.4f}')

        # Mean training loss and accuracy for this epoch
        epoch_train_loss = running_loss / len(train_loader)
        epoch_train_acc = 100. * correct / total
        train_acc_history.append(epoch_train_acc)
        train_loss_history.append(epoch_train_loss)

        # Evaluation phase
        model.eval()  # evaluation mode
        test_loss = 0
        correct_test = 0
        total_test = 0

        with torch.no_grad():
            for data, target in test_loader:
                data, target = data.to(device), target.to(device)
                output = model(data)
                test_loss += criterion(output, target).item()
                _, predicted = output.max(1)
                total_test += target.size(0)
                correct_test += predicted.eq(target).sum().item()

        epoch_test_loss = test_loss / len(test_loader)
        epoch_test_acc = 100. * correct_test / total_test
        test_acc_history.append(epoch_test_acc)
        test_loss_history.append(epoch_test_loss)

        print(f'Epoch {epoch+1}/{epochs} done | Train accuracy: {epoch_train_acc:.2f}% | Test accuracy: {epoch_test_acc:.2f}%')

        # Step the learning-rate scheduler on the test loss
        scheduler.step(epoch_test_loss)

        # Early-stopping check on the test accuracy (higher is better)
        early_stopping(epoch_test_acc, model)
        if early_stopping.early_stop:
            print("Early stopping triggered, ending training.")
            break

    # Plot the loss of every iteration
    plot_iter_losses(all_iter_losses, iter_indices)

    # Plot the per-epoch accuracy and loss curves
    plot_epoch_metrics(train_acc_history, test_acc_history, train_loss_history, test_loss_history)

    return epoch_test_acc  # test accuracy of the last epoch that ran

# 6. Plot the loss of every iteration

def plot_iter_losses(losses, indices):
    plt.figure(figsize=(10, 4))
    plt.plot(indices, losses, 'b-', alpha=0.7, label='Iteration Loss')
    plt.xlabel('Iteration (batch number)')
    plt.ylabel('Loss')
    plt.title('Training loss per iteration')
    plt.legend()
    plt.grid(True)
    plt.tight_layout()
    plt.show()

# 7. Plot the per-epoch accuracy and loss curves
def plot_epoch_metrics(train_acc, test_acc, train_loss, test_loss):
    epochs = range(1, len(train_acc) + 1)

    plt.figure(figsize=(12, 4))

    # Accuracy curves
    plt.subplot(1, 2, 1)
    plt.plot(epochs, train_acc, 'b-', label='Train accuracy')
    plt.plot(epochs, test_acc, 'r-', label='Test accuracy')
    plt.xlabel('Epoch')
    plt.ylabel('Accuracy (%)')
    plt.title('Train and test accuracy')
    plt.legend()
    plt.grid(True)

    # Loss curves
    plt.subplot(1, 2, 2)
    plt.plot(epochs, train_loss, 'b-', label='Train loss')
    plt.plot(epochs, test_loss, 'r-', label='Test loss')
    plt.xlabel('Epoch')
    plt.ylabel('Loss')
    plt.title('Train and test loss')
    plt.legend()
    plt.grid(True)

    plt.tight_layout()
    plt.show()

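# 8. Run training and testing

epochs = 30  # more epochs for a better result

print("Starting CNN training...")

final_accuracy = train(model, train_dataset, test_dataset, device, epochs)

print(f"Training finished! Final test accuracy: {final_accuracy:.2f}%")

The best test-set accuracy was 80.44%, not much different from day 41.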